Monday, July 10, 2017

Thursday, June 22, 2017

Evaluation of a proposal for reliable low-cost grid power with 100% wind, water, and solar

Evaluation of a proposal for reliable low-cost grid power with 100% wind, water, and solar. By Christopher T. M. Clack, Staffan A. Qvist, Jay Apt, Morgan Bazilian, Adam R. Brandt, Ken Caldeira, Steven J. Davis, Victor Diakov, Mark A. Handschy, Paul D. H. Hines, Paulina Jaramillo, Daniel M. Kammen, Jane C. S. Long, M. Granger Morgan, Adam Reed, Varun Sivaram, James Sweeney, George R. Tynan, David G. Victor, John P. Weyant, and Jay F. Whitacre. Proceedings of the National Academy of Sciences. http://www.pnas.org/content/early/2017/06/16/1610381114.full

Significance: Previous analyses have found that the most feasible route to a low-carbon energy future is one that adopts a diverse portfolio of technologies. In contrast, Jacobson et al. (2015) consider whether the future primary energy sources for the United States could be narrowed to almost exclusively wind, solar, and hydroelectric power and suggest that this can be done at “low-cost” in a way that supplies all power with a probability of loss of load “that exceeds electric-utility-industry standards for reliability”. We find that their analysis involves errors, inappropriate methods, and implausible assumptions. Their study does not provide credible evidence for rejecting the conclusions of previous analyses that point to the benefits of considering a broad portfolio of energy system options. A policy prescription that overpromises on the benefits of relying on a narrower portfolio of technology options could be counterproductive, seriously impeding the move to a cost-effective decarbonized energy system.

Abstract: A number of analyses, meta-analyses, and assessments, including those performed by the Intergovernmental Panel on Climate Change, the National Oceanic and Atmospheric Administration, the National Renewable Energy Laboratory, and the International Energy Agency, have concluded that deployment of a diverse portfolio of clean energy technologies makes a transition to a low-carbon-emission energy system both more feasible and less costly than other pathways. In contrast, Jacobson et al. [Jacobson MZ, Delucchi MA, Cameron MA, Frew BA (2015) Proc Natl Acad Sci USA 112(49):15060–15065] argue that it is feasible to provide “low-cost solutions to the grid reliability problem with 100% penetration of WWS [wind, water and solar power] across all energy sectors in the continental United States between 2050 and 2055”, with only electricity and hydrogen as energy carriers. In this paper, we evaluate that study and find significant shortcomings in the analysis. In particular, we point out that this work used invalid modeling tools, contained modeling errors, and made implausible and inadequately supported assumptions. Policy makers should treat with caution any visions of a rapid, reliable, and low-cost transition to entire energy systems that relies almost exclusively on wind, solar, and hydroelectric power.

Monday, June 5, 2017

Healthy offspring from freeze-dried mouse spermatozoa held on the International Space Station for 9 months

Healthy offspring from freeze-dried mouse spermatozoa held on the International Space Station for 9 months. Sayaka Wakayama et al. Proceedings of the National Academy of Sciences, May 22 2017. http://www.pnas.org/content/early/2017/05/16/1701425114.full

Abstract: If humans ever start to live permanently in space, assisted reproductive technology using preserved spermatozoa will be important for producing offspring; however, radiation on the International Space Station (ISS) is more than 100 times stronger than that on Earth, and irradiation causes DNA damage in cells and gametes. Here we examined the effect of space radiation on freeze-dried mouse spermatozoa held on the ISS for 9 mo at –95 °C, with launch and recovery at room temperature. DNA damage to the spermatozoa and male pronuclei was slightly increased, but the fertilization and birth rates were similar to those of controls. Next-generation sequencing showed only minor genomic differences between offspring derived from space-preserved spermatozoa and controls, and all offspring grew to adulthood and had normal fertility. Thus, we demonstrate that although space radiation can damage sperm DNA, it does not affect the production of viable offspring after at least 9 mo of storage on the ISS.

Saturday, June 3, 2017

Do Globalization and Free Markets Drive Obesity among Children and Youth? An Empirical Analysis, 1990–2013

ABSTRACT: Scholars of public health identify globalization as a major cause of obesity. Free markets are blamed for spreading high calorie, nutrient-poor diets, and sedentary lifestyles across the globe. Global trade and investment agreements apparently curtail government action in the interest of public health. Globalization is also blamed for raising income inequality and social insecurities, which contribute to “obesogenic” environments. Contrary to recent empirical studies, this study demonstrates that globalization and several component parts, such as trade openness, FDI flows, and an index of economic freedom, reduce weight gain and obesity among children and youth, the most likely age cohort to be affected by the past three decades of globalization and attendant lifestyle changes. The results suggest strongly that local-level factors possibly matter much more than do global-level factors for explaining why some people remain thin and others put on weight. The proposition that globalization is homogenizing cultures across the globe in terms of diets and lifestyles is possibly exaggerated. The results support the proposition that globalized countries prioritize health because of the importance of labor productivity and human capital due to heightened market competition, ceteris paribus, even if rising incomes might drive high consumption.

KEYWORDS: Globalization, obesity, trade and FDI, economic freedom

Indra de Soysa & Ann Kristin de Soysa

International Interactions

Vol. 0 , Iss. 0,0

http://www.tandfonline.com/doi

Monday, December 26, 2016

What scientists think of themselves, other scientists and the population at large

Who Believes in the Storybook Image of the Scientist?

http://www.tandfonline.com/doi/full/10.1080/08989621.2016.1268922

Dec 2016

Abstract: Do lay people and scientists themselves recognize that scientists are human and therefore prone to human fallibilities such as error, bias, and even dishonesty? In a series of three experimental studies and one correlational study (total N = 3,278) we found that the ‘storybook image of the scientist’ is pervasive: American lay people and scientists from over 60 countries attributed considerably more objectivity, rationality, open-mindedness, intelligence, integrity, and communality to scientists than other highly-educated people. Moreover, scientists perceived even larger differences than lay people did. Some groups of scientists also differentiated between different categories of scientists: established scientists attributed higher levels of the scientific traits to established scientists than to early-career scientists and PhD students, and higher levels to PhD students than to early-career scientists. Female scientists attributed considerably higher levels of the scientific traits to female scientists than to male scientists. A strong belief in the storybook image and the (human) tendency to attribute higher levels of desirable traits to people in one’s own group than to people in other groups may decrease scientists’ willingness to adopt recently proposed practices to reduce error, bias and dishonesty in science.

Tuesday, December 6, 2016

My Unhappy Life as a Climate Heretic. By Roger Pielke Jr.

My research was attacked by thought police in journalism, activist groups funded by billionaires and even the White House.

http://www.wsj.com/articles/my-unhappy-life-as-a-climate-heretic-1480723518

Updated Dec. 2, 2016 7:04 p.m. ET

Much to my surprise, I showed up in the WikiLeaks releases before the election. In a 2014 email, a staffer at the Center for American Progress, founded by John Podesta in 2003, took credit for a campaign to have me eliminated as a writer for Nate Silver’s FiveThirtyEight website. In the email, the editor of the think tank’s climate blog bragged to one of its billionaire donors, Tom Steyer: “I think it’s fair [to] say that, without Climate Progress, Pielke would still be writing on climate change for 538.”

WikiLeaks provides a window into a world I’ve seen up close for decades: the debate over what to do about climate change, and the role of science in that argument. Although it is too soon to tell how the Trump administration will engage the scientific community, my long experience shows what can happen when politicians and media turn against inconvenient research—which we’ve seen under Republican and Democratic presidents.

I understand why Mr. Podesta—most recently Hillary Clinton’s campaign chairman—wanted to drive me out of the climate-change discussion. When substantively countering an academic’s research proves difficult, other techniques are needed to banish it. That is how politics sometimes works, and professors need to understand this if we want to participate in that arena.

More troubling is the degree to which journalists and other academics joined the campaign against me. What sort of responsibility do scientists and the media have to defend the ability to share research, on any subject, that might be inconvenient to political interests—even our own?

I believe climate change is real and that human emissions of greenhouse gases risk justifying action, including a carbon tax. But my research led me to a conclusion that many climate campaigners find unacceptable: There is scant evidence to indicate that hurricanes, floods, tornadoes or drought have become more frequent or intense in the U.S. or globally. In fact we are in an era of good fortune when it comes to extreme weather. This is a topic I’ve studied and published on as much as anyone over two decades. My conclusion might be wrong, but I think I’ve earned the right to share this research without risk to my career.

Instead, my research was under constant attack for years by activists, journalists and politicians. In 2011 writers in the journal Foreign Policy signaled that some accused me of being a “climate-change denier.” I earned the title, the authors explained, by “questioning certain graphs presented in IPCC reports.” That an academic who raised questions about the Intergovernmental Panel on Climate Change in an area of his expertise was tarred as a denier reveals the groupthink at work.

Yet I was right to question the IPCC’s 2007 report, which included a graph purporting to show that disaster costs were rising due to global temperature increases. The graph was later revealed to have been based on invented and inaccurate information, as I documented in my book “The Climate Fix.” The insurance industry scientist Robert Muir-Wood of Risk Management Solutions had smuggled the graph into the IPCC report. He explained in a public debate with me in London in 2010 that he had included the graph and misreferenced it because he expected future research to show a relationship between increasing disaster costs and rising temperatures.

When his research was eventually published in 2008, well after the IPCC report, it concluded the opposite: “We find insufficient evidence to claim a statistical relationship between global temperature increase and normalized catastrophe losses.” Whoops.

The IPCC never acknowledged the snafu, but subsequent reports got the science right: There is not a strong basis for connecting weather disasters with human-caused climate change.

Yes, storms and other extremes still occur, with devastating human consequences, but history shows they could be far worse. No Category 3, 4 or 5 hurricane has made landfall in the U.S. since Hurricane Wilma in 2005, by far the longest such period on record. This means that cumulative economic damage from hurricanes over the past decade is some $70 billion less than the long-term average would lead us to expect, based on my research with colleagues. This is good news, and it should be OK to say so. Yet in today’s hyper-partisan climate debate, every instance of extreme weather becomes a political talking point.

For a time I called out politicians and reporters who went beyond what science can support, but some journalists won’t hear of this. In 2011 and 2012, I pointed out on my blog and social media that the lead climate reporter at the New York Times, Justin Gillis, had mischaracterized the relationship of climate change and food shortages, and the relationship of climate change and disasters. His reporting wasn’t consistent with most expert views, or the evidence. In response he promptly blocked me from his Twitter feed. Other reporters did the same.

In August this year on Twitter, I criticized poor reporting on the website Mashable about a supposed coming hurricane apocalypse—including a bad misquote of me in the cartoon role of climate skeptic. (The misquote was later removed.) The publication’s lead science editor, Andrew Freedman, helpfully explained via Twitter that this sort of behavior “is why you’re on many reporters’ ‘do not call’ lists despite your expertise.”

I didn’t know reporters had such lists. But I get it. No one likes being told that he misreported scientific research, especially on climate change. Some believe that connecting extreme weather with greenhouse gases helps to advance the cause of climate policy. Plus, bad news gets clicks.

Yet more is going on here than thin-skinned reporters responding petulantly to a vocal professor. In 2015 I was quoted in the Los Angeles Times, by Pulitzer Prize-winning reporter Paige St. John, making the rather obvious point that politicians use the weather-of-the-moment to make the case for action on climate change, even if the scientific basis is thin or contested.

Ms. St. John was pilloried by her peers in the media. Shortly thereafter, she emailed me what she had learned: “You should come with a warning label: Quoting Roger Pielke will bring a hailstorm down on your work from the London Guardian, Mother Jones, and Media Matters.”

Or look at the journalists who helped push me out of FiveThirtyEight. My first article there, in 2014, was based on the consensus of the IPCC and peer-reviewed research. I pointed out that the global cost of disasters was increasing at a rate slower than GDP growth, which is very good news. Disasters still occur, but their economic and human effect is smaller than in the past. It’s not terribly complicated.

That article prompted an intense media campaign to have me fired. Writers at Slate, Salon, the New Republic, the New York Times, the Guardian and others piled on.

In March of 2014, FiveThirtyEight editor Mike Wilson demoted me from staff writer to freelancer. A few months later I chose to leave the site after it became clear it wouldn’t publish me. The mob celebrated. ClimateTruth.org, founded by former Center for American Progress staffer Brad Johnson, and advised by Penn State’s Michael Mann, called my departure a “victory for climate truth.” The Center for American Progress promised its donor Mr. Steyer more of the same.

Yet the climate thought police still weren’t done. In 2013 committees in the House and Senate invited me to several hearings to summarize the science on disasters and climate change. As a professor at a public university, I was happy to do so. My testimony was strong, and it was well aligned with the conclusions of the IPCC and the U.S. government’s climate-science program. Those conclusions indicate no overall increasing trend in hurricanes, floods, tornadoes or droughts—in the U.S. or globally.

In early 2014, not long after I appeared before Congress, President Obama’s science adviser John Holdren testified before the same Senate Environment and Public Works Committee. He was asked about his public statements that appeared to contradict the scientific consensus on extreme weather events that I had earlier presented. Mr. Holdren responded with the all-too-common approach of attacking the messenger, telling the senators incorrectly that my views were “not representative of the mainstream scientific opinion.” Mr. Holdren followed up by posting a strange essay, of nearly 3,000 words, on the White House website under the heading, “An Analysis of Statements by Roger Pielke Jr.,” where it remains today.

I suppose it is a distinction of a sort to be singled out in this manner by the president’s science adviser. Yet Mr. Holdren’s screed reads more like a dashed-off blog post from the nutty wings of the online climate debate, chock-full of errors and misstatements.

But when the White House puts a target on your back on its website, people notice. Almost a year later Mr. Holdren’s missive was the basis for an investigation of me by Arizona Rep. Raul Grijalva, the ranking Democrat on the House Natural Resources Committee. Rep. Grijalva explained in a letter to my university’s president that I was being investigated because Mr. Holdren had “highlighted what he believes were serious misstatements by Prof. Pielke of the scientific consensus on climate change.” He made the letter public.

The “investigation” turned out to be a farce. In the letter, Rep. Grijalva suggested that I—and six other academics with apparently heretical views—might be on the payroll of Exxon Mobil (or perhaps the Illuminati, I forget). He asked for records detailing my research funding, emails and so on. After some well-deserved criticism from the American Meteorological Society and the American Geophysical Union, Rep. Grijalva deleted the letter from his website. The University of Colorado complied with Rep. Grijalva’s request and responded that I have never received funding from fossil-fuel companies. My heretical views can be traced to research support from the U.S. government.

But the damage to my reputation had been done, and perhaps that was the point. Studying and engaging on climate change had become decidedly less fun. So I started researching and teaching other topics and have found the change in direction refreshing. Don’t worry about me: I have tenure and supportive campus leaders and regents. No one is trying to get me fired for my new scholarly pursuits.

But the lesson is that a lone academic is no match for billionaires, well-funded advocacy groups, the media, Congress and the White House. If academics—in any subject—are to play a meaningful role in public debate, the country will have to do a better job supporting good-faith researchers, even when their results are unwelcome. This goes for Republicans and Democrats alike, and for the administration of President-elect Trump.

Academics and the media in particular should support viewpoint diversity instead of serving as the handmaidens of political expediency by trying to exclude voices or damage reputations and careers. If academics and the media won’t support open debate, who will?

---

Mr. Pielke is a professor and director of the Sports Governance Center at the University of Colorado, Boulder. His most recent book is “The Edge: The Wars Against Cheating and Corruption in the Cutthroat World of Elite Sports” (Roaring Forties Press, 2016).

My research was attacked by thought police in journalism, activist groups funded by billionaires and even the White House.http://www.wsj.com/articles/my-unhappy-life-as-a-climate-heretic-1480723518

Updated Dec. 2, 2016 7:04 p.m. ET

Much to my surprise, I showed up in the WikiLeaks releases before the election. In a 2014 email, a staffer at the Center for American Progress, founded by John Podesta in 2003, took credit for a campaign to have me eliminated as a writer for Nate Silver’s FiveThirtyEight website. In the email, the editor of the think tank’s climate blog bragged to one of its billionaire donors, Tom Steyer: “I think it’s fair [to] say that, without Climate Progress, Pielke would still be writing on climate change for 538.”

WikiLeaks provides a window into a world I’ve seen up close for decades: the debate over what to do about climate change, and the role of science in that argument. Although it is too soon to tell how the Trump administration will engage the scientific community, my long experience shows what can happen when politicians and media turn against inconvenient research—which we’ve seen under Republican and Democratic presidents.

I understand why Mr. Podesta—most recently Hillary Clinton’s campaign chairman—wanted to drive me out of the climate-change discussion. When substantively countering an academic’s research proves difficult, other techniques are needed to banish it. That is how politics sometimes works, and professors need to understand this if we want to participate in that arena.

More troubling is the degree to which journalists and other academics joined the campaign against me. What sort of responsibility do scientists and the media have to defend the ability to share research, on any subject, that might be inconvenient to political interests—even our own?

I believe climate change is real and that human emissions of greenhouse gases risk justifying action, including a carbon tax. But my research led me to a conclusion that many climate campaigners find unacceptable: There is scant evidence to indicate that hurricanes, floods, tornadoes or drought have become more frequent or intense in the U.S. or globally. In fact we are in an era of good fortune when it comes to extreme weather. This is a topic I’ve studied and published on as much as anyone over two decades. My conclusion might be wrong, but I think I’ve earned the right to share this research without risk to my career.

Instead, my research was under constant attack for years by activists, journalists and politicians. In 2011 writers in the journal Foreign Policy signaled that some accused me of being a “climate-change denier.” I earned the title, the authors explained, by “questioning certain graphs presented in IPCC reports.” That an academic who raised questions about the Intergovernmental Panel on Climate Change in an area of his expertise was tarred as a denier reveals the groupthink at work.

Yet I was right to question the IPCC’s 2007 report, which included a graph purporting to show that disaster costs were rising due to global temperature increases. The graph was later revealed to have been based on invented and inaccurate information, as I documented in my book “The Climate Fix.” The insurance industry scientist Robert-Muir Wood of Risk Management Solutions had smuggled the graph into the IPCC report. He explained in a public debate with me in London in 2010 that he had included the graph and misreferenced it because he expected future research to show a relationship between increasing disaster costs and rising temperatures.

When his research was eventually published in 2008, well after the IPCC report, it concluded the opposite: “We find insufficient evidence to claim a statistical relationship between global temperature increase and normalized catastrophe losses.” Whoops.

The IPCC never acknowledged the snafu, but subsequent reports got the science right: There is not a strong basis for connecting weather disasters with human-caused climate change.

Yes, storms and other extremes still occur, with devastating human consequences, but history shows they could be far worse. No Category 3, 4 or 5 hurricane has made landfall in the U.S. since Hurricane Wilma in 2005, by far the longest such period on record. This means that cumulative economic damage from hurricanes over the past decade is some $70 billion less than the long-term average would lead us to expect, based on my research with colleagues. This is good news, and it should be OK to say so. Yet in today’s hyper-partisan climate debate, every instance of extreme weather becomes a political talking point.

For a time I called out politicians and reporters who went beyond what science can support, but some journalists won’t hear of this. In 2011 and 2012, I pointed out on my blog and social media that the lead climate reporter at the New York Times, Justin Gillis, had mischaracterized the relationship of climate change and food shortages, and the relationship of climate change and disasters. His reporting wasn’t consistent with most expert views, or the evidence. In response he promptly blocked me from his Twitter feed. Other reporters did the same.

In August this year on Twitter, I criticized poor reporting on the website Mashable about a supposed coming hurricane apocalypse—including a bad misquote of me in the cartoon role of climate skeptic. (The misquote was later removed.) The publication’s lead science editor, Andrew Freedman, helpfully explained via Twitter that this sort of behavior “is why you’re on many reporters’ ‘do not call’ lists despite your expertise.”

I didn’t know reporters had such lists. But I get it. No one likes being told that he misreported scientific research, especially on climate change. Some believe that connecting extreme weather with greenhouse gases helps to advance the cause of climate policy. Plus, bad news gets clicks.

Yet more is going on here than thin-skinned reporters responding petulantly to a vocal professor. In 2015 I was quoted in the Los Angeles Times, by Pulitzer Prize-winning reporter Paige St. John, making the rather obvious point that politicians use the weather-of-the-moment to make the case for action on climate change, even if the scientific basis is thin or contested.

Ms. St. John was pilloried by her peers in the media. Shortly thereafter, she emailed me what she had learned: “You should come with a warning label: Quoting Roger Pielke will bring a hailstorm down on your work from the London Guardian, Mother Jones, and Media Matters.”

Or look at the journalists who helped push me out of FiveThirtyEight. My first article there, in 2014, was based on the consensus of the IPCC and peer-reviewed research. I pointed out that the global cost of disasters was increasing at a rate slower than GDP growth, which is very good news. Disasters still occur, but their economic and human effect is smaller than in the past. It’s not terribly complicated.

That article prompted an intense media campaign to have me fired. Writers at Slate, Salon, the New Republic, the New York Times, the Guardian and others piled on.

In March of 2014, FiveThirtyEight editor Mike Wilson demoted me from staff writer to freelancer. A few months later I chose to leave the site after it became clear it wouldn’t publish me. The mob celebrated. ClimateTruth.org, founded by former Center for American Progress staffer Brad Johnson, and advised by Penn State’s Michael Mann, called my departure a “victory for climate truth.” The Center for American Progress promised its donor Mr. Steyer more of the same.

Yet the climate thought police still weren’t done. In 2013 committees in the House and Senate invited me to several hearings to summarize the science on disasters and climate change. As a professor at a public university, I was happy to do so. My testimony was strong, and it was well aligned with the conclusions of the IPCC and the U.S. government’s climate-science program. Those conclusions indicate no overall increasing trend in hurricanes, floods, tornadoes or droughts—in the U.S. or globally.

In early 2014, not long after I appeared before Congress, President Obama’s science adviser John Holdren testified before the same Senate Environment and Public Works Committee. He was asked about his public statements that appeared to contradict the scientific consensus on extreme weather events that I had earlier presented. Mr. Holdren responded with the all-too-common approach of attacking the messenger, telling the senators incorrectly that my views were “not representative of the mainstream scientific opinion.” Mr. Holdren followed up by posting a strange essay, of nearly 3,000 words, on the White House website under the heading, “An Analysis of Statements by Roger Pielke Jr.,” where it remains today.

I suppose it is a distinction of a sort to be singled out in this manner by the president’s science adviser. Yet Mr. Holdren’s screed reads more like a dashed-off blog post from the nutty wings of the online climate debate, chock-full of errors and misstatements.

But when the White House puts a target on your back on its website, people notice. Almost a year later Mr. Holdren’s missive was the basis for an investigation of me by Arizona Rep. Raul Grijalva, the ranking Democrat on the House Natural Resources Committee. Rep. Grijalva explained in a letter to my university’s president that I was being investigated because Mr. Holdren had “highlighted what he believes were serious misstatements by Prof. Pielke of the scientific consensus on climate change.” He made the letter public.

The “investigation” turned out to be a farce. In the letter, Rep. Grijalva suggested that I—and six other academics with apparently heretical views—might be on the payroll of Exxon Mobil (or perhaps the Illuminati, I forget). He asked for records detailing my research funding, emails and so on. After some well-deserved criticism from the American Meteorological Society and the American Geophysical Union, Rep. Grijalva deleted the letter from his website. The University of Colorado complied with Rep. Grijalva’s request and responded that I have never received funding from fossil-fuel companies. My heretical views can be traced to research support from the U.S. government.

But the damage to my reputation had been done, and perhaps that was the point. Studying and engaging on climate change had become decidedly less fun. So I started researching and teaching other topics and have found the change in direction refreshing. Don’t worry about me: I have tenure and supportive campus leaders and regents. No one is trying to get me fired for my new scholarly pursuits.

But the lesson is that a lone academic is no match for billionaires, well-funded advocacy groups, the media, Congress and the White House. If academics—in any subject—are to play a meaningful role in public debate, the country will have to do a better job supporting good-faith researchers, even when their results are unwelcome. This goes for Republicans and Democrats alike, and to the administration of President-elect Trump.

Academics and the media in particular should support viewpoint diversity instead of serving as the handmaidens of political expediency by trying to exclude voices or damage reputations and careers. If academics and the media won’t support open debate, who will?

---

Mr. Pielke is a professor and director of the Sports Governance Center at the University of Colorado, Boulder. His most recent book is “The Edge: The Wars Against Cheating and Corruption in the Cutthroat World of Elite Sports” (Roaring Forties Press, 2016).

Saturday, May 10, 2014

China moves to free-market pricing for pharmaceuticals, after price controls led to quality problems & shortages

China Scraps Price Caps on Low-Cost Drugs. By Laurie Burkitt

Move Comes After Some Manufacturers Cut Corners on Production

Wall Street Journal, May 8, 2014 1:15 a.m.

http://online.wsj.com/news/articles/SB10001424052702304655304579548933340544044

Beijing

China will scrap caps on retail prices for low-cost medicine and is moving toward free-market pricing for pharmaceuticals, after price controls led to drug quality problems and shortages in the country.

The move could be a welcome one for global pharmaceutical companies, which have been under scrutiny in China since last year for their sales and marketing practices.

The world's most populous country is the third-largest pharmaceutical market, behind the U.S. and Japan, according to data from consulting firm McKinsey & Co., but Beijing has used price caps and other measures to keep medical care affordable.

Price caps will be lifted for 280 medicines made by Western drug companies and 250 Chinese patent drugs, the National Development and Reform Commission, China's economic planning body, said Thursday. The move will affect prices on drugs such as antibiotics, painkillers and vitamins, it said.

The statement said local governments will have until July 1 to unveil details of the plan. In China, local authorities have broad oversight over how drugs are distributed to local hospitals.

Aiming to keep prices low, some manufacturers cut corners on production, exposing consumers to safety risks, said Helen Chen, a Shanghai-based partner and director of L.E.K. Consulting. Many also closed production, creating shortages of low-cost drugs such as thyroid medication.

"It means the [commission] recognizes that forcing prices down and focusing purely on price does sacrifice drug safety, quality and availability," said Ms. Chen.

Several drug makers, including GlaxoSmithKline PLC, didn't immediately respond to requests for comment. Spokeswomen for Sanofi and Pfizer Inc. said that because implementation of the new policy is unclear, it is too early to understand how it will affect their business in China.

The industry was dealt a blow last summer when Chinese authorities accused Glaxo of bribing doctors, hospitals and local officials to increase sales of their drugs. The U.K. company has said some of its employees may have violated Chinese law.

The central government, which began overhauling the country's health-care system in 2009, has until now largely favored pricing caps and has encouraged provincial governments to cut health-care costs and prices. Regulators phased out five years ago premium pricing for a list of "essential drugs" to be available in hospitals.

Chinese leaders want health care to be more accessible and affordable, but there have been unintended consequences in attempting to ensure the lowest prices on drugs. For instance, many pharmaceutical companies registered to sell the thyroid medication Tapazole have halted production in recent years after pricing restrictions squeezed out profits, experts say, creating a shortage. Chinese patients with hyperthyroidism struggled to find the drug and many suffered with increased anxiety, muscle weakness and sleep disorder, according to local media reports.

In 2012, some drug-capsule manufacturers were found to be using industrial gelatin to cut production costs. The industrial gelatin contained the chemical chromium, which can be carcinogenic with frequent exposure, according to the U.S. Centers for Disease Control and Prevention.

"Manufacturers have attempted to save costs, and doing that has meant using lower-quality ingredients," said Ms. Chen.

The pricing reversal won't necessarily alleviate pricing pressure for these drugs, experts say. To get drugs into hospitals, companies must compete in a tendering process at the provincial level, said Justin Wang, also a partner at L.E.K. "It's still unclear how the provinces will react to this new national list," Mr. Wang said.

If provinces don't change their current system, price will remain a key competitive factor for drug makers, said Franck Le Deu, a partner at McKinsey's China division.

"The bottom line is that there may be more safety and more pricing transparency, but the focus intensifies on creating more innovative drugs," Mr. Le Deu said.

—Liyan Qi contributed to this article.

Saturday, December 28, 2013

MRSA Infections, swine effluent lagoons, and farm consolidations

Replying to some comments on a review of the book 'In Meat We Trust,' by Maureen Ogle (http://online.wsj.com/news/articles/SB10001424052702303482504579177742158078278), WSJ, Dec. 17, 2013 6:36 p.m. ET:

A recent paper* in a FAO publication summarizes advances in hog manure management. Obviously, the cases mentioned are small in comparison with the great consolidated farms, but even so, there are multiple ways to manage the effluents better, and the paper shows some useful ways to profit from the lagoons/catchments.

@Mr Evangelista: I got access to the paper** you mentioned. If you are interested, you may ask me for it. I'd like, though, to calm things down. As another paper*** published at the same time says (it is likely the one Mr Blumenthal mentioned):

"In 2011, we estimated the overall number of invasive MRSA infections was 80 461; 31% lower than when estimates were first available in 2005"

The reasons are not well understood (several explanations are offered), but that is not relevant now. The important idea is that despite increasing consolidation of farm operations and an increasing population (from approx 295 million in 2005 to approx 311 million in 2011), there are 31% fewer MRSA infections.
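To make the population point concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted above (80,461 infections in 2011, a 31% drop since 2005, and the approximate populations); the 2005 count is back-calculated from the rounded 31% figure and is an assumption, not a number taken from the paper. The function name is purely illustrative.

```python
# Figures quoted in the post. The 2005 count is NOT quoted anywhere;
# it is implied by the rounded "31% lower" figure and is only approximate.
infections_2011 = 80_461
relative_drop = 0.31      # "31% lower than ... 2005"
pop_2005 = 295e6          # approximate U.S. population, as stated above
pop_2011 = 311e6          # approximate U.S. population, as stated above


def per_capita_decline(count_drop, pop_start, pop_end):
    """Fractional decline in the per-person rate, given the fractional
    drop in total counts and the start and end populations."""
    return 1 - (1 - count_drop) * pop_start / pop_end


# Implied 2005 estimate (assumption derived from the rounded 31%).
infections_2005 = infections_2011 / (1 - relative_drop)

# Rates per 100,000 people.
rate_2005 = infections_2005 / pop_2005 * 100_000
rate_2011 = infections_2011 / pop_2011 * 100_000

decline = per_capita_decline(relative_drop, pop_2005, pop_2011)

print(f"2005: about {rate_2005:.1f} per 100,000")
print(f"2011: about {rate_2011:.1f} per 100,000")
print(f"Per-capita decline: about {decline:.1%}")
```

Under these assumptions the per-person rate falls by somewhat more than the 31% drop in total cases, since the population grew over the same period.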

References

* Intensive and Integrated Farm Systems using Fermentation of Swine Effluent in Brazil. By I. Bergier, E. Soriano, G. Wiedman and A. Kososki. In Biotechnologies at Work for Smallholders: Case Studies from Developing Countries in Crops, Livestock and Fish. Edited by J. Ruane, J.D. Dargie, C. Mba, P. Boettcher, H.P.S. Makkar, D.M. Bartley and A. Sonnino. Food and Agriculture Organization of the United Nations, 2013. http://www.fao.org/docrep/018/i3403e/i3403e00.htm

** High-Density Livestock Operations, Crop Field Application of Manure, and Risk of Community-Associated Methicillin-Resistant Staphylococcus aureus Infection in Pennsylvania. By Joan A. Casey, MA; Frank C. Curriero, PhD, MA; Sara E. Cosgrove, MD, MS; Keeve E. Nachman, PhD, MHS; Brian S. Schwartz, MD, MS. JAMA Intern Med. Vol 173, No. 21, doi:10.1001/jamainternmed.2013.10408

*** National Burden of Invasive Methicillin-Resistant Staphylococcus aureus Infections, United States, 2011. By Raymund Dantes, MD, MPH; Yi Mu, PhD; Ruth Belflower, RN, MPH; Deborah Aragon, MSPH; Ghinwa Dumyati, MD; Lee H. Harrison, MD; Fernanda C. Lessa, MD; Ruth Lynfield, MD; Joelle Nadle, MPH; Susan Petit, MPH; Susan M. Ray, MD; William Schaffner, MD; John Townes, MD; Scott Fridkin, MD; for the Emerging Infections Program–Active Bacterial Core Surveillance MRSA Surveillance Investigators. JAMA Intern Med. Vol 173, No. 21, doi:10.1001/jamainternmed.2013.10423

Tuesday, July 16, 2013

Trevor Butterworth's Fad Food Nation

Fad Food Nation. By Trevor Butterworth

A skeptical survey of the claims being made about food, health and the environment.

The Wall Street Journal, July 16, 2013, on page A13

online.wsj.com/article/SB10001424127887323823004578593943760620664.html

Excerpts:

Not so long ago, I spoke to a chef who ministers to children attending some of the most elite and expensive schools in America. Why, I asked him, was his company's website larded with almost comical warnings about the lethality of eating genetically modified (GM) food? Did he actually believe this as scientific fact or was he catering to his clientele's spiritual fears? It was simply for the mothers, he said, candidly. They ate it up—or, rather, they had swallowed so many apocalyptic warnings about genetically modified food that he had no choice but to echo their terror. How could they entrust their children to him otherwise? The downside of such dogma, he explained, was cost. Many of the mothers wouldn't agree to their children eating anything less than 100% organic, even if organic food required flying in, as he put it, "apples from Cuba."

Mr. Butterworth is a contributor at Newsweek and editor at large for STATS.org.

Wednesday, September 19, 2012

New Report Aims to Improve the Science Behind Regulatory Decision-Making

http://www.americanchemistry.com/Media/PressReleasesTranscripts/ACC-news-releases/New-Report-Aims-to-Improve-the-Science-Behind-Regulatory-Decision-Making.html

WASHINGTON, D.C. (September 18, 2012) – Scientists and policy experts from industry, government, and nonprofit sectors reached consensus on ways to improve the rigor and transparency of regulatory decision-making in a report being released today. The Research Integrity Roundtable, a cross-sector working group convened and facilitated by The Keystone Center, an independent public policy organization, is releasing the new report to improve the scientific analysis and independent expert reviews which underpin many important regulatory decisions. The report, Model Practices and Procedures for Improving the Use of Science in Regulatory Decision-Making, builds on the work of the Bipartisan Policy Center (BPC) in its 2009 report Science for Policy Project: Improving the Use of Science in Regulatory Policy.

"Americans need to have confidence in a U.S. regulatory system that encourages rational, science-based decision-making," said Mike Walls, Vice President of Regulatory and Technical Affairs for the American Chemistry Council (ACC), one of the sponsors of the Keystone Roundtable. "For this report, a broad spectrum of stakeholders came together to identify and help resolve some of the more troubling inconsistencies and roadblocks at the intersection of science and regulatory policy."

Controversies surrounding a regulatory decision often arise over the composition and transparency of scientific advisory panels and the scientific analysis used to support such decisions. The Roundtable's report is the product of 18 months of deliberations among experts from advocacy groups, professional associations and industry, as well as liaisons from several key Federal agencies. The report centers on two main public policy challenges that lead to controversy in the regulatory process: appointments of scientific experts, and the conduct of systematic scientific reviews.

The Roundtable's recommendations aim to improve the selection process for scientists on federal advisory panels and the scientific analysis used to draw conclusions that inform policy. The report seeks to maximize transparency and objectivity at every step in the regulatory decision-making process by informing the formation of scientific advisory committees and use of systematic reviews. The Roundtable's report offers specific recommendations for improving expert panel selection by better addressing potential conflicts of interest and bias. In addition, the report recommends ways to improve systematic reviews of scientific studies by outlining a step-by-step process, and by calling for clearer criteria to determine the relevance and credibility of studies.

"Conflicted experts and poor scientific assessments threaten the scientific integrity of agency decision making as well as the public's faith in agencies to protect their health and safety," said Francesca Grifo, Senior Scientist and Science Policy Fellow for the Union of Concerned Scientists. "Given the abundance of inflamed partisan dialogue around regulatory issues, it was refreshing to be a part of a rational and respectful roundtable. If adopted by agencies, the changes recommended in the report have the potential to reduce the ability of narrow interests to weaken regulations' power to protect the public good."

The Keystone Center and members of the Research Integrity Roundtable welcome additional conversations and dialogue on the matters explored in and recommendations presented in this report.

For more information, access the Roundtable's website at: www.Keystone.org/researchintegrity.

Tuesday, May 15, 2012

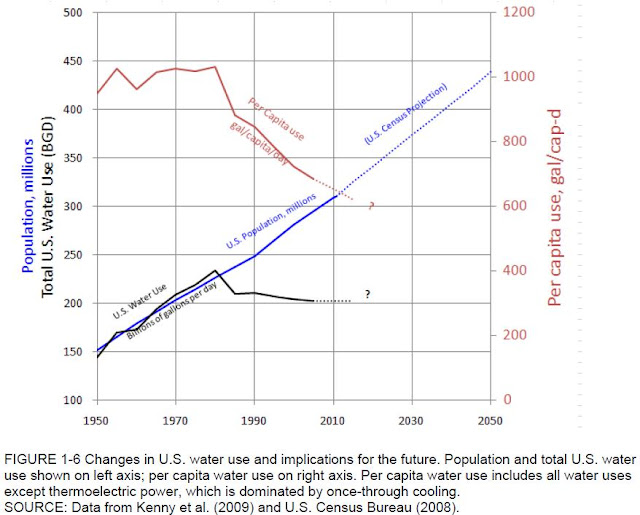

Changes in U.S. water use and implications for the future

It is interesting to see some data in Water Reuse: Expanding the Nation's Water Supply Through Reuse of Municipal Wastewater (http://www.nap.edu/catalog.php?record_id=13303), a National Research Council publication.

See for example figure 1-6, p 17, changes in U.S. water use and implications for the future:

Friday, October 21, 2011

The Case Against Global-Warming Skepticism

The Case Against Global-Warming Skepticism. By Richard A Muller

There were good reasons for doubt, until now.

http://online.wsj.com/article/SB10001424052970204422404576594872796327348.html

WSJ, Oct 21, 2011

Are you a global warming skeptic? There are plenty of good reasons why you might be.

As many as 757 stations in the United States recorded net surface-temperature cooling over the past century. Many are concentrated in the southeast, where some people attribute tornadoes and hurricanes to warming.

The temperature-station quality is largely awful. The most important stations in the U.S. are included in the Department of Energy's Historical Climatology Network. A careful survey of these stations by a team led by meteorologist Anthony Watts showed that 70% of these stations have such poor siting that, by the U.S. government's own measure, they result in temperature uncertainties of between two and five degrees Celsius or more. We do not know how much worse are the stations in the developing world.

Using data from all these poor stations, the U.N.'s Intergovernmental Panel on Climate Change estimates an average global 0.64ºC temperature rise in the past 50 years, "most" of which the IPCC says is due to humans. Yet the margin of error for the stations is at least three times larger than the estimated warming.

We know that cities show anomalous warming, caused by energy use and building materials; asphalt, for instance, absorbs more sunlight than do trees. Tokyo's temperature rose about 2ºC in the last 50 years. Could that rise, and increases in other urban areas, have been unreasonably included in the global estimates? That warming may be real, but it has nothing to do with the greenhouse effect and can't be addressed by carbon dioxide reduction.

Moreover, the three major temperature analysis groups (the U.S.'s NASA and National Oceanic and Atmospheric Administration, and the U.K.'s Met Office and Climatic Research Unit) analyze only a small fraction of the available data, primarily from stations that have long records. There's a logic to that practice, but it could lead to selection bias. For instance, older stations were often built outside of cities but today are surrounded by buildings. These groups today use data from about 2,000 stations, down from roughly 6,000 in 1970, raising even more questions about their selections.

On top of that, stations have moved, instruments have changed and local environments have evolved. Analysis groups try to compensate for all this by homogenizing the data, though there are plenty of arguments to be had over how best to homogenize long-running data taken from around the world in varying conditions. These adjustments often result in corrections of several tenths of one degree Celsius, significant fractions of the warming attributed to humans.

And that's just the surface-temperature record. What about the rest? The number of named hurricanes has been on the rise for years, but that's in part a result of better detection technologies (satellites and buoys) that find storms in remote regions. The number of hurricanes hitting the U.S., even more intense Category 4 and 5 storms, has been gradually decreasing since 1850. The number of detected tornadoes has been increasing, possibly because radar technology has improved, but the number that touch down and cause damage has been decreasing. Meanwhile, the short-term variability in U.S. surface temperatures has been decreasing since 1800, suggesting a more stable climate.

Without good answers to all these complaints, global-warming skepticism seems sensible. But now let me explain why you should not be a skeptic, at least not any longer.

Over the last two years, the Berkeley Earth Surface Temperature Project has looked deeply at all the issues raised above. I chaired our group, which just submitted four detailed papers on our results to peer-reviewed journals. We have now posted these papers online at www.BerkeleyEarth.org to solicit even more scrutiny.

Our work covers only land temperature—not the oceans—but that's where warming appears to be the greatest. Robert Rohde, our chief scientist, obtained more than 1.6 billion measurements from more than 39,000 temperature stations around the world. Many of the records were short in duration, and to use them Mr. Rohde and a team of esteemed scientists and statisticians developed a new analytical approach that let us incorporate fragments of records. By using data from virtually all the available stations, we avoided data-selection bias. Rather than try to correct for the discontinuities in the records, we simply sliced the records where the data cut off, thereby creating two records from one.

We discovered that about one-third of the world's temperature stations have recorded cooling temperatures, and about two-thirds have recorded warming. The two-to-one ratio reflects global warming. The changes at the locations that showed warming were typically between 1-2ºC, much greater than the IPCC's average of 0.64ºC.

To study urban-heating bias in temperature records, we used satellite determinations that subdivided the world into urban and rural areas. We then conducted a temperature analysis based solely on "very rural" locations, distant from urban ones. The result showed a temperature increase similar to that found by other groups. Only 0.5% of the globe is urbanized, so it makes sense that even a 2ºC rise in urban regions would contribute negligibly to the global average.

What about poor station quality? Again, our statistical methods allowed us to analyze the U.S. temperature record separately for stations with good or acceptable rankings, and those with poor rankings (the U.S. is the only place in the world that ranks its temperature stations). Remarkably, the poorly ranked stations showed no greater temperature increases than the better ones. The most likely explanation is that while low-quality stations may give incorrect absolute temperatures, they still accurately track temperature changes.

When we began our study, we felt that skeptics had raised legitimate issues, and we didn't know what we'd find. Our results turned out to be close to those published by prior groups. We think that means that those groups had truly been very careful in their work, despite their inability to convince some skeptics of that. They managed to avoid bias in their data selection, homogenization and other corrections.

Global warming is real. Perhaps our results will help cool this portion of the climate debate. How much of the warming is due to humans and what will be the likely effects? We made no independent assessment of that.

Mr. Muller is a professor of physics at the University of California, Berkeley, and the author of "Physics for Future Presidents" (W.W. Norton & Co., 2008).

There were good reasons for doubt, until now.

http://online.wsj.com/article/SB10001424052970204422404576594872796327348.html

WSJ, Oct 21, 2011


Tuesday, October 4, 2011

White House: Now is Not the Time to Wave the White Flag on Clean Energy Jobs

Now is Not the Time to Wave the White Flag on Clean Energy Jobs. Blog post from Dan Pfeiffer, White House Communications Director

http://www.whitehouse.gov/blog/2011/10/04/now-not-time-wave-white-flag-clean-energy-jobs

October 04, 2011

This morning, Chairman Cliff Stearns, who leads the House Energy and Commerce Subcommittee on Oversight and Investigations, told NPR that "We can't compete with China to make solar panels and wind turbines."

This comment reflects exactly the sort of counterproductive defeatism that Energy Secretary Steven Chu warned against this weekend when he spoke to a group of America’s most promising young solar innovators:

“The United States faces a choice today: Will we sit on the sidelines and fall behind or will we play to win the clean energy race? Some say this is a race America can’t win. They’re ready to wave the white flag and declare defeat… Others say this is a race America shouldn’t even be in. They say we can’t afford to invest in clean energy. I say we can’t afford not to.

“It’s not enough for our country to invent clean energy technologies – we have to make them and use them too. Invented in America, made in America, and sold around the world – that’s how we’ll create good jobs and lead in the 21st century.”

The race for clean energy jobs and industries is on – and it is a race well worth winning. The International Energy Agency projects that in the coming decades, solar power could grow to more than 20 percent of the world’s electricity.

Conservatively, this means that there is an economic opportunity worth trillions of dollars for whichever countries claim the lead. The global market for wind turbines is also growing exponentially.

But it’s not just the vast potential of jobs tomorrow – these industries employ a growing number of Americans today. In fact, business groups estimate that America’s solar industry accounts for about 100,000 jobs and the wind industry employs 75,000. Should we simply tell those workers that we’ve given up on them?

A study released last month showed that, in spite of the intense global competition, the U.S. remains a net global exporter of solar technology – with $5.6 billion in exports and an overall positive trade balance of $1.8 billion.

It is certainly true that China is playing to win. Last year alone, China offered its solar manufacturers $30 billion in government financing, vastly exceeding the U.S. investment. And China has overtaken the United States in market share for solar power – a technology we invented.

Chairman Stearns and other members of his party in Congress believe that America cannot, or should not, try to compete for jobs in a cutting-edge and rapidly growing industry. We simply disagree: the answer to this challenge is not to wave the white flag and give up on American workers. America has never declared defeat after a single setback – and we shouldn’t start now.

America’s entrepreneurs and innovators are still the very best in the world. Our workers are second to none – and we have never been afraid of a challenge. It’s time to do what we’ve always done in the face of a tough competitor: roll up our sleeves and recapture the lead.


Friday, September 30, 2011

EPA Inspector General Statement on Greenhouse Gases Endangerment Finding Report - Data Quality Processes


Press Statement - U.S. Environmental Protection Agency

For Immediate Release

Office of Inspector General

Washington, D.C., September 28, 2011. Contact: John Manibusan. Phone: (202) 566-2391

http://www.epa.gov/oig/reports/2011/IG_Statement_Greenhouse_Gases_Endangerment_Report.pdf

WASHINGTON, D.C. – Statement of Inspector General Arthur A. Elkins, Jr., on the Office of Inspector General (OIG) report Procedural Review of EPA’s Greenhouse Gases Endangerment Finding Data Quality Processes:
